The Simple Question That Breaks AI

Three years ago, John was 30. What’s his age today?

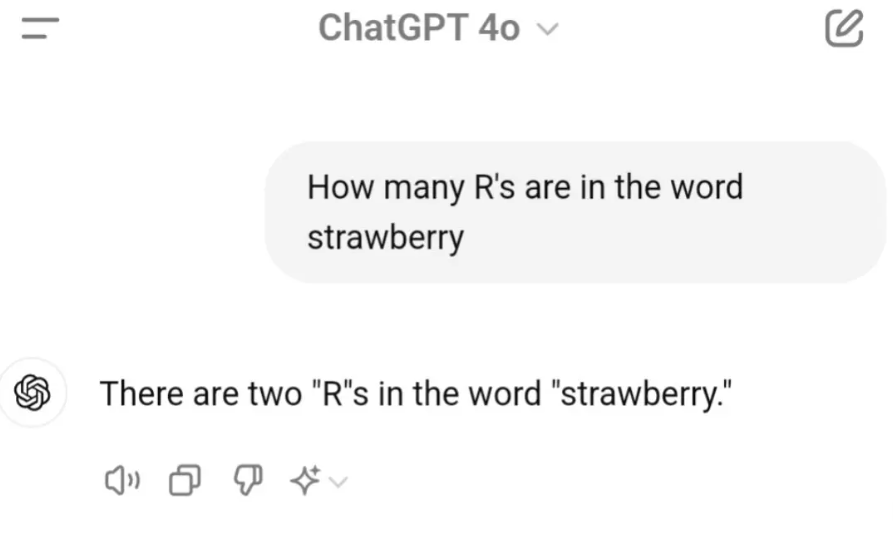

Ask a 5-year-old, and you’ll get a confident “33.” Ask a cutting-edge LLM? You might hear, “John is still 30.”

That’s not a joke. I ran this prompt through multiple local models, including Cogito 3B and a couple of community-favorite LLMs. Two froze mid-reasoning. One hallucinated. Another confidently clung to “30” as if time itself had paused for John Smith. I had to force-stop the models before they spiraled into existential loops.

That’s when I stumbled upon Apple’s quietly released research paper: “The Illusion of Thinking: Understanding the Strengths and Limitations of Reasoning Models via the Lens of Problem Complexity.”

This wasn’t just a paper. It was a mirror held up to our collective AI hype.

Apple’s Quiet Bombshell

The research doesn’t chase headlines. But read between the lines, and the message is brutal:

Large Reasoning Models (LRMs) aren’t reasoning. They’re rehearsing — and they stumble once the stage changes.

Apple’s team didn’t just test final-answer accuracy (the usual game of scoring math or coding benchmarks). Instead, they built controllable puzzle environments that let them dial up compositional complexity while keeping the underlying logical structure constant. Because every intermediate step of a puzzle can be checked, they could examine how the models reason, not just what they answer.

The findings?

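1. Reasoning collapses at high complexity: As puzzles grow more layered, accuracy eventually collapses. Past that point, the models’ reasoning effort (the number of thinking tokens they spend) stops rising and starts to shrink, even though they still have token budget left. The AI does less when asked for more.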

2. Surprising underdogs: On simple tasks, standard LLMs (models without a dedicated “thinking” mode) often outperformed the supposedly smarter LRMs. Brute fluency beat half-baked logic.

3. Three-tiered failure curve:

Simple tasks → LLMs win.

Medium tasks → LRMs shine with their verbose reasoning.

Hard tasks → Both fall apart. Sometimes poetically.

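4. Inconsistent computation: Models don’t follow stable algorithms. Even when the researchers supplied the solution algorithm in the prompt, performance didn’t meaningfully improve. Ask them to solve similar puzzles with tiny differences? Expect wildly different approaches, like solving one with algebra and the next with vibes.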
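To make “complexity as a dial” and “stable algorithm” concrete: one of the puzzles in Apple’s study was Tower of Hanoi, where adding disks raises complexity without changing the rules. The Python sketch below is not the paper’s code; the function names `hanoi_moves` and `is_valid_solution` and the peg labels are hypothetical. It shows that one fixed recursive procedure solves every size, that the optimal solution length grows as 2^n − 1, and that a simple simulator can check every intermediate move, the kind of step-by-step checking that puzzle environments allow.

```python
def hanoi_moves(n, source="A", target="C", spare="B"):
    """Optimal move list for n disks, via the standard recursive algorithm."""
    if n == 0:
        return []
    moves = hanoi_moves(n - 1, source, spare, target)   # park n-1 disks on the spare peg
    moves.append((source, target))                       # move the largest disk
    moves += hanoi_moves(n - 1, spare, target, source)   # restack n-1 disks on top of it
    return moves


def is_valid_solution(n, moves):
    """Simulate the moves; check each one is legal and the puzzle ends solved."""
    pegs = {"A": list(range(n, 0, -1)), "B": [], "C": []}  # bottom-to-top, largest first
    for src, dst in moves:
        if not pegs[src]:
            return False                                   # nothing to move
        disk = pegs[src][-1]
        if pegs[dst] and pegs[dst][-1] < disk:
            return False                                   # larger disk onto a smaller one
        pegs[dst].append(pegs[src].pop())
    return pegs["C"] == list(range(n, 0, -1))             # all disks stacked on peg C


for n in (1, 3, 5, 10):
    moves = hanoi_moves(n)
    print(n, "disks:", len(moves), "moves, valid =", is_valid_solution(n, moves))
# last line printed: 10 disks: 1023 moves, valid = True
```

The same few lines handle 1 disk or 10; only the length of the output changes. A system that truly executed a procedure like this would stay correct at every size, which is exactly where the paper reports LRMs breaking down.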

My John Smith Moment

I didn’t need a research lab to feel this.

I asked a 3B LLM to solve: “John was 30 years old 3 years ago. What’s his age now?”

First response:

“John is 30.”

Second response:

“John is still 30 because 3 years ago, he was 30.”

No reasoning. Just repetition. It was like watching a parrot misquote Socrates.

I added a prompt to “think step by step.” It generated a four-line explanation, every line correct-sounding, that still ended with: “John is 30.”

In other words, the reasoning trace sounded intelligent but led nowhere.

Apple’s paper helped me decode this: these models simulate reasoning; they don’t execute it.

So What Do We Do With This?

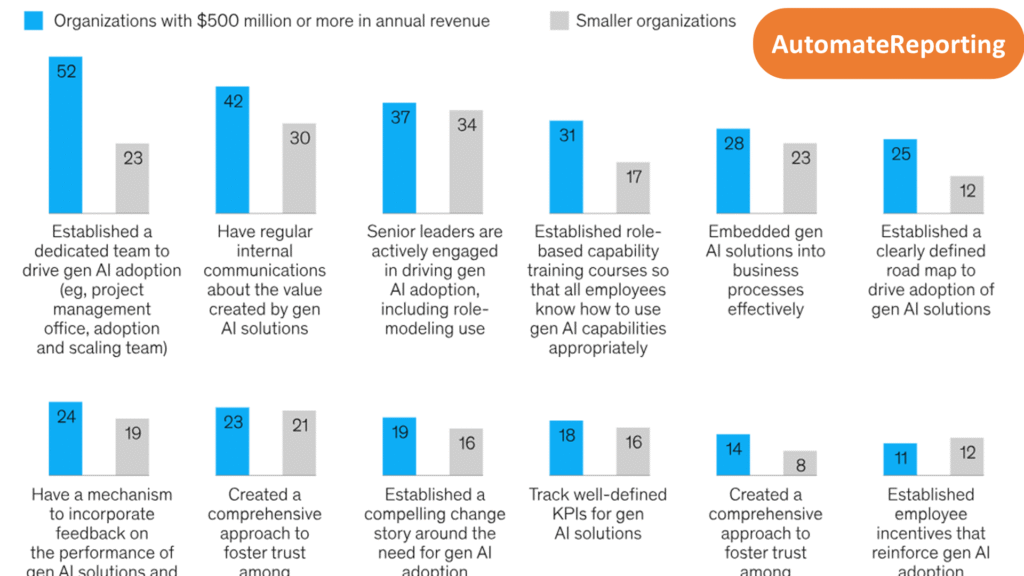

If you’re a leader relying on LLMs for decision-support, this should be your wake-up call.

The future of AI isn’t just in scaling up; it’s in slowing down: tracing how a model thinks and catching the wrong steps before they become decisions.
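What might “catching the wrong steps” look like in practice? Here is a minimal Python sketch of a verification gate, assuming the question has a deterministic answer you can compute outside the model. The helper names (`expected_age_now`, `final_number`, `verify`) are hypothetical, and treating the last integer in a reply as the model’s answer is a deliberately naive heuristic; a production system would need sturdier answer extraction. The first two replies are the ones quoted from my 3B experiment; the third is a hypothetical correct reply.

```python
import re


def expected_age_now(age_then, years_elapsed):
    """Deterministic ground truth: age now = age back then + years elapsed."""
    return age_then + years_elapsed


def final_number(response):
    """Naive heuristic: take the last integer in the reply as the model's answer."""
    numbers = re.findall(r"-?\d+", response)
    return int(numbers[-1]) if numbers else None


def verify(response, expected):
    """Return (passed, extracted_answer) by comparing against the ground truth."""
    answer = final_number(response)
    return answer == expected, answer


truth = expected_age_now(age_then=30, years_elapsed=3)  # 33
for reply in ["John is 30.",
              "John is still 30 because 3 years ago, he was 30.",
              "John is 33."]:  # hypothetical correct reply
    ok, answer = verify(reply, truth)
    print(f"{'PASS' if ok else 'FAIL'} (got {answer}, expected {truth}): {reply}")
```

The design choice is to never trust the model’s arithmetic: the gate recomputes the answer independently and only lets matching responses through. Both quoted replies fail, however fluent the explanation around them.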

Right now, we’re betting billions on models that sound wise — but can’t age John by three years.

Final Thought: Are We Building Thinkers or Talkers?

The next time you hear an LLM explain something with confidence, pause. Ask yourself: Is it thinking? Or just echoing the patterns of people who once did?

And if John’s still 30 in that world — maybe we’re the ones who need to grow up.

Key Takeaways

Apple’s research reveals that Large Reasoning Models simulate rather than execute true reasoning

Simple arithmetic problems expose fundamental flaws in AI reasoning capabilities

Scaling problem complexity reveals a collapse point: past a certain difficulty, accuracy drops to zero and reasoning effort declines

Business leaders should implement reasoning verification systems before relying on AI decisions

The AI industry needs to focus on reasoning quality, not just conversational fluency

Want to stay updated on AI reasoning breakthroughs and failures? Follow for more insights on the reality behind the AI hype.