The 6 Levels of AI That Will Replace Your Job (And How to Stay Ahead)

From Basic Automation to Fully Autonomous Systems – Which Level Is Coming for Your Industry Next?

Artificial Intelligence is rapidly transforming how businesses operate. But not all AI systems are created equal.

Understanding the different levels of AI autonomy helps you choose the AI solutions that best fit your organization’s needs.

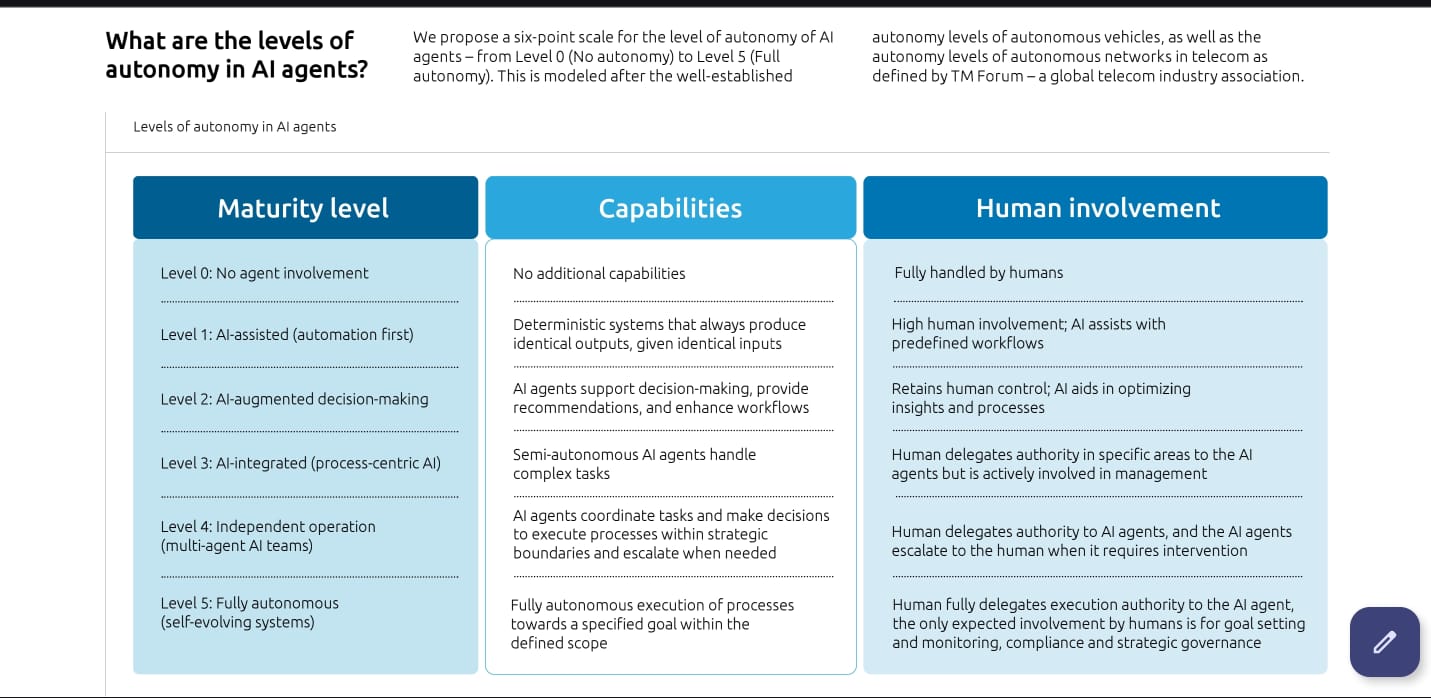

This framework breaks AI agent autonomy into six distinct levels, from no agent involvement (Level 0) to fully autonomous systems (Level 5).

Let’s explore each level and how it can affect your business operations.

What is AI Agent Autonomy?

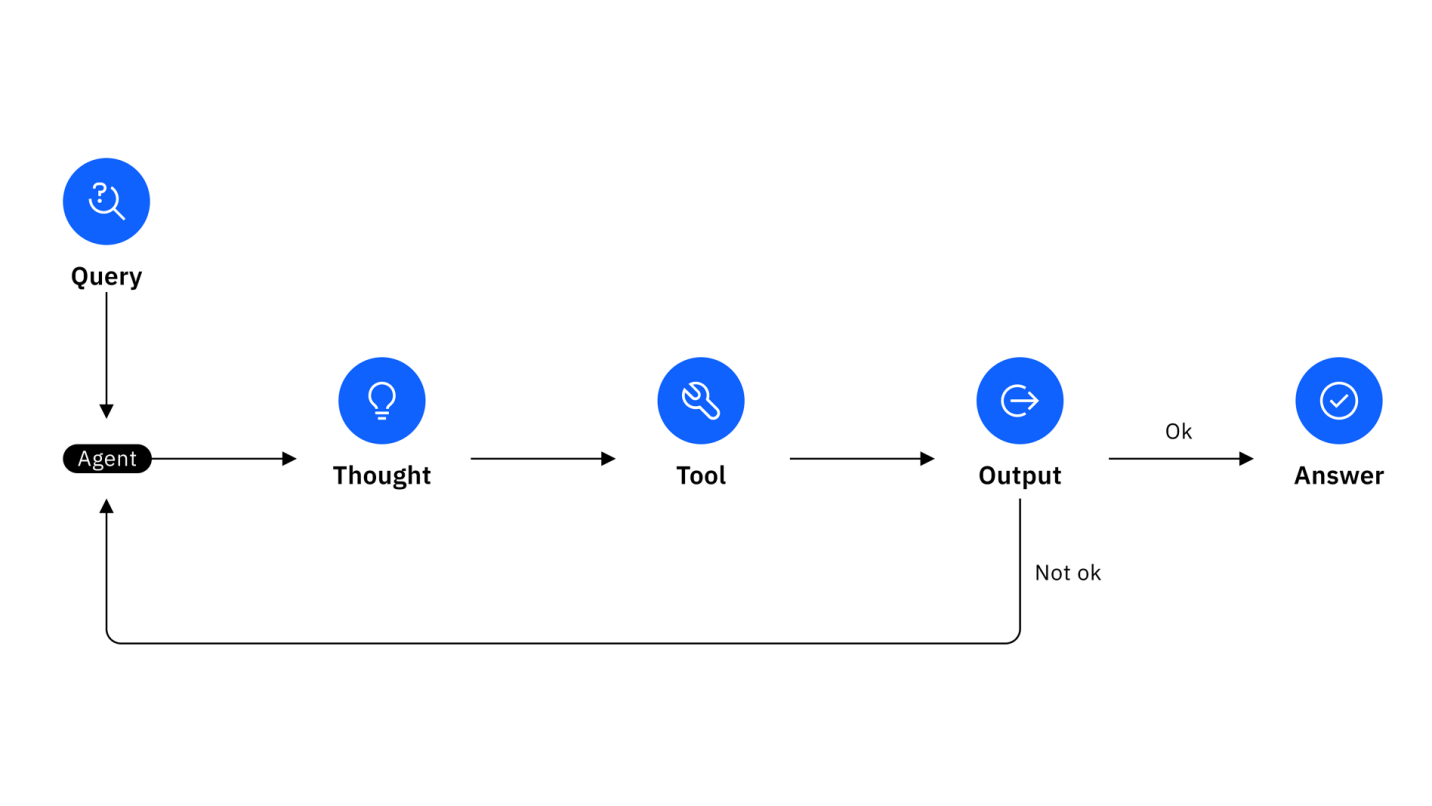

AI agent autonomy refers to the degree of independence an artificial intelligence system has in making decisions and executing tasks without human intervention.

The higher the autonomy level, the more independently the AI can operate.

This six-level scale draws inspiration from established frameworks, such as the driving-automation levels used for autonomous vehicles and the autonomy levels defined for telecom networks.

It provides a clear roadmap for understanding AI capabilities across different industries.
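As a rough sketch, the six levels can be modeled as an ordered enumeration so that code can compare them. The member names and the `requires_human_decision` helper are illustrative assumptions that paraphrase the level titles in this article; they are not part of any standard.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """The six autonomy levels, ordered from least to most independent."""
    NO_AGENT = 0               # manual, human-run processes
    AI_ASSISTED = 1            # automation of predefined workflows
    AI_AUGMENTED = 2           # AI recommends, humans decide
    AI_INTEGRATED = 3          # semi-autonomous agents inside business processes
    INDEPENDENT_OPERATION = 4  # multi-agent teams that escalate when needed
    FULLY_AUTONOMOUS = 5       # self-evolving systems under human governance


def requires_human_decision(level: AutonomyLevel) -> bool:
    """Illustrative rule: through Level 2, a human makes every final decision."""
    return level <= AutonomyLevel.AI_AUGMENTED
```

Because `IntEnum` members compare like integers, a check such as `AutonomyLevel.AI_INTEGRATED > AutonomyLevel.AI_ASSISTED` expresses "more autonomous than" directly.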

Level 0: No Agent Involvement – The Foundation

Maturity Level

At Level 0, there’s no AI agent involvement whatsoever.

This represents traditional, manual processes that rely entirely on human decision-making and execution.

Key Capabilities

- No additional AI capabilities beyond standard software

- Deterministic systems producing identical outputs from identical inputs

- Complete reliance on pre-programmed logic
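To make "deterministic" concrete, here is a minimal sketch of pre-programmed logic. The function name `route_invoice` and the 10,000 threshold are hypothetical, chosen only for illustration.

```python
def route_invoice(amount: float) -> str:
    """Hard-coded rule: nothing is learned, so the same input always
    produces the same output."""
    if amount >= 10_000:  # hypothetical approval threshold
        return "manager_approval"
    return "standard_queue"
```

Calling `route_invoice(12_000)` any number of times yields the same route, which is what separates this kind of standard software from the adaptive systems at higher levels.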

Human Involvement

Tasks are fully handled by humans with no AI assistance.

This level serves as the baseline for measuring AI integration progress.

Best for: Organizations just beginning their AI journey, and processes that require full human oversight.

Level 1: AI-Assisted (Automation First) – The Helper

Maturity Level

Level 1 introduces AI as a supportive tool, with an automation-first approach that enhances human productivity.

Key Capabilities

- Deterministic systems with consistent, predictable outcomes

- Basic automation of repetitive tasks

- Simple pattern recognition and data processing

Human Involvement

Human involvement is high: AI assists with predefined workflows.

Humans maintain complete control while AI handles routine tasks.

Examples:

- Email filtering and sorting

- Basic data entry automation

- Simple chatbot responses

Best for: Teams looking to reduce manual workload without changing existing processes.

Level 2: AI-Augmented Decision-Making – The Advisor

Maturity Level

At Level 2, AI systems begin supporting decision-making by providing recommendations and insights.

Key Capabilities

- AI agents support decision-making with data-driven recommendations

- Enhanced workflow optimization

- Predictive analytics and trend identification

Human Involvement

Humans retain control while AI supplies insights and helps optimize processes.

The final decision always rests with human operators.
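The advisor pattern can be sketched as a recommendation step followed by a human approval step. Everything named here (`Recommendation`, `recommend_reorder`, `decide`, and the inventory scenario) is a hypothetical illustration; a simple comparison stands in for a real predictive model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Recommendation:
    action: str
    rationale: str


def recommend_reorder(stock: int, forecast_demand: int) -> Recommendation:
    """Stand-in for a predictive model: it suggests an action but never acts."""
    if forecast_demand > stock:
        return Recommendation(
            "reorder", f"forecast {forecast_demand} exceeds stock {stock}"
        )
    return Recommendation("hold", f"stock {stock} covers forecast {forecast_demand}")


def decide(rec: Recommendation,
           human_approves: Callable[[Recommendation], bool]) -> str:
    """The human callback has the final say; a rejected recommendation
    results in no action."""
    return rec.action if human_approves(rec) else "no_action"
```

In this sketch the AI output is only ever an input to `decide`, so nothing happens unless the human callback returns `True`.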

Examples:

- Sales forecasting tools

- Content recommendation engines

- Risk assessment platforms

Best for: Organizations that want data-driven insights while maintaining human oversight of critical decisions.

Level 3: AI-Integrated (Process-Centric AI) – The Collaborator

Maturity Level

Level 3 represents process-centric AI, where artificial intelligence becomes an integral part of business workflows.

Key Capabilities

- Semi-autonomous AI agents handle complex, multi-step tasks

- Integration with existing business processes

- Advanced problem-solving within defined parameters

Human Involvement

Humans delegate authority in specific areas to AI agents but remain actively involved in management and oversight.

Examples:

- Automated customer service resolution

- Supply chain optimization

- Dynamic pricing adjustments

Best for: Established organizations ready to integrate AI deeply into core business processes.

Level 4: Independent Operation (Multi-Agent AI Teams) – The Team Player

Maturity Level

Level 4 introduces independent operation through multi-agent AI systems whose agents coordinate and collaborate with one another.

Key Capabilities

- AI agents coordinate tasks and make decisions within strategic boundaries

- Multi-agent systems working in harmony

- Autonomous escalation when human intervention is needed

Human Involvement

Humans delegate authority to AI agents.

AI systems escalate to humans only when intervention is required.
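Escalation within strategic boundaries can be sketched as a guard around the agent's action. The `Outcome` type, the `handle_trade` function, and the risk-limit scenario are hypothetical, and the boundary is simplified to a single numeric limit.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    status: str  # "executed" or "escalated"
    reason: str


def handle_trade(order_value: float, risk_limit: float) -> Outcome:
    """Act autonomously inside the boundary; hand off to a human outside it."""
    if order_value <= risk_limit:
        return Outcome("executed", "within risk limit")
    return Outcome("escalated", "exceeds risk limit; human review required")
```

Here the agent decides on its own whether to escalate. That is the difference from Level 2, where a human reviews every decision.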

Examples:

- Automated trading systems with risk limits

- Smart manufacturing coordination

- Multi-channel marketing campaigns

Best for: Advanced organizations with mature AI infrastructure that are seeking operational efficiency gains.

Level 5: Fully Autonomous (Self-Evolving Systems) – The Independent Operator

Maturity Level

The highest level represents fully autonomous, self-evolving AI systems capable of independent operation.

Key Capabilities

- Fully autonomous execution of processes toward specified goals

- Self-learning and adaptation capabilities

- Continuous improvement without human programming

Human Involvement

Humans fully delegate execution authority to AI agents.

Human involvement is limited to goal setting, monitoring, compliance oversight, and strategic governance.

Examples:

- Autonomous vehicle fleets

- Self-managing data centers

- Advanced algorithmic trading systems

Best for: Organizations with sophisticated AI maturity that are seeking maximum automation and efficiency.

Choosing the Right Autonomy Level for Your Business

Assessment Questions

Before implementing AI agents, consider these key questions:

Operational Readiness

- What’s your current level of digital maturity?

- How comfortable is your team with AI-driven processes?

- What’s your risk tolerance for automated decision-making?

Business Requirements

- Which processes consume the most human resources?

- Where do errors have the highest business impact?

- What’s your timeline for AI implementation?

Technical Infrastructure

- Do you have the data quality needed for AI training?

- Is your current technology stack AI-ready?

- What’s your budget for AI implementation and maintenance?

Implementation Best Practices

Start Small, Scale Smart

Begin with Level 1 or 2 implementations in non-critical areas.

This approach allows your team to build confidence and expertise before tackling more complex autonomy levels.

Focus on Data Quality

Higher autonomy levels require high-quality, consistent data.

Invest in data governance and cleaning processes early in your AI journey.

Maintain Human Oversight

Even at higher autonomy levels, maintain clear escalation paths.

Regular human review processes ensure accountability and continuous improvement.

Plan for Change Management

Each autonomy level requires different skills and mindsets from your team.

Invest in training and change management to ensure successful adoption.

The Future of AI Agent Autonomy

As AI technology continues advancing, we’ll see more organizations moving toward higher autonomy levels.

However, success depends on careful planning and proper implementation.

The key is finding the autonomy level that maximizes efficiency while maintaining the control and oversight your business requires.

⚠️ WARNING: Companies that don’t adapt to AI automation risk being left behind by competitors who embrace these technologies strategically.

Conclusion

Understanding AI agent autonomy levels helps you make informed decisions about AI implementation in your organization.

Whether you’re starting with basic automation or exploring fully autonomous systems, this framework provides a clear roadmap for your AI journey.

Remember: The “best” autonomy level isn’t always the highest one. Choose the level that aligns with your business needs, risk tolerance, and operational maturity.

Ready to implement AI agents in your organization? Start by assessing your current processes. Identify areas where AI assistance could provide immediate value.

Then gradually progress through the autonomy levels as your team builds experience and confidence.

This article provides a comprehensive overview of AI agent autonomy levels based on established industry frameworks. For personalized AI implementation guidance, consider consulting with AI strategy experts who can assess your specific business needs.