In the world of AI, there’s a growing myth: that large language models (LLMs) are already intelligent.

They’re not.

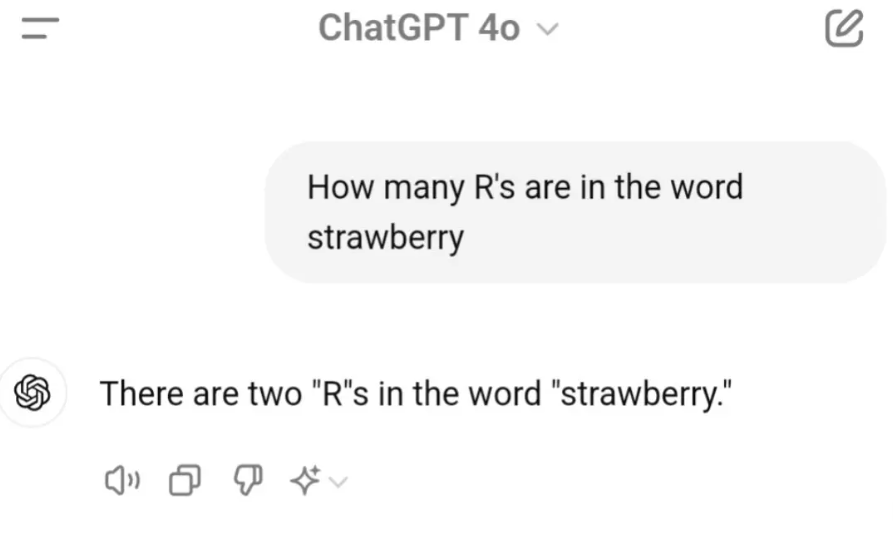

Let me explain with a test I ran this week.

The Setup: A Simple Reasoning Task

I asked a few open-source models a straightforward prompt:

“John Smith was 30 years old 3 years ago. What is John’s age now?”

This isn’t a trick question. It’s elementary time math — the kind we expect any reasoning system to solve easily.
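To make the expected answer explicit, the arithmetic can be written out in a few lines of Python. This is a minimal sketch, not the harness used for the test: `current_age` and `extract_final_int` are hypothetical helper names, and pulling the last integer out of a reply is a naive heuristic that will misread answers phrased in other ways.

```python
import re
from typing import Optional


def current_age(age_then: int, years_ago: int) -> int:
    """Age now = age at the earlier point + years elapsed since then."""
    return age_then + years_ago


def extract_final_int(reply: str) -> Optional[int]:
    """Naive heuristic: treat the last integer in a reply as the model's answer."""
    matches = re.findall(r"\d+", reply)
    return int(matches[-1]) if matches else None


# The prompt: John was 30 years old 3 years ago.
expected = current_age(age_then=30, years_ago=3)

# A made-up reply, used only to illustrate the check.
reply = "John was 30 three years ago, so 30 + 3 = 33"
is_correct = extract_final_int(reply) == expected
```

A reply that ends on some other number, such as "33, since 30 plus 3", would fool this check, so anything beyond a quick sanity test needs a stricter answer format.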

Here’s what happened.

- DeepSeek R1 responded: 33

- LLaMA 3.2 responded: 33

- Cogito 3B… broke.

And I don’t mean it got the answer wrong.

The Breakdown

Cogito didn’t just misfire. It collapsed into a loop.

It began analyzing every word, second-guessing the phrasing, debating the nature of “3 years ago,” and exploring all possible meanings of “as of,” a phrase that does not even appear in the prompt.

It asked itself questions like:

- What if “3 years ago” is a reference to the writing date?

- Could the phrase mean he was 3 years old in 2022?

- Was there a typo?

- Is time even real?

It felt less like a model running inference and more like an undergrad overthinking a philosophy exam.

Eventually, it became so confused that I had to force-stop it manually. The model couldn’t recover.
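A runaway generation like this can also be cut off from the harness side instead of by hand. The sketch below assumes the model's output arrives as an iterator of text chunks. `stream_with_budget` and the two fake models are hypothetical names invented for illustration, not part of any particular runtime's API.

```python
import time
from typing import Iterable, Iterator, List, Tuple


def stream_with_budget(
    chunks: Iterable[str],
    max_chunks: int = 512,
    max_seconds: float = 30.0,
) -> Tuple[str, str]:
    """Collect streamed text until the stream ends or a budget is exhausted.

    Returns (text, reason), where reason is "finished", "chunk_budget",
    or "time_budget".
    """
    start = time.monotonic()
    collected: List[str] = []
    for count, chunk in enumerate(chunks, start=1):
        if count > max_chunks:
            return "".join(collected), "chunk_budget"
        if time.monotonic() - start >= max_seconds:
            return "".join(collected), "time_budget"
        collected.append(chunk)
    return "".join(collected), "finished"


def fake_looping_model() -> Iterator[str]:
    """Hypothetical stand-in for a model that never converges."""
    while True:
        yield "But what does '3 years ago' really mean? "


def fake_converging_model() -> Iterator[str]:
    """Hypothetical stand-in for a model that answers and stops."""
    yield "30 + 3 = "
    yield "33"


looping_text, looping_reason = stream_with_budget(fake_looping_model(), max_chunks=5)
answer_text, answer_reason = stream_with_budget(fake_converging_model())
```

The elapsed-time check only runs when a new chunk arrives, so a stream that stalls completely would still need an external timeout. A budget like this stops the loop, but it does nothing to help the model converge.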

The Bigger Point: Intelligence ≠ Language Fluency

This is not a criticism of Cogito specifically — it’s a reminder of the gap that still exists.

LLMs are excellent at language generation. They can summarize, rephrase, autocomplete, and simulate conversation. But reasoning — especially controlled, convergent reasoning — is still fragile.

What we’re seeing isn’t intelligence. It’s statistical mimicry wrapped in good grammar.

DeepSeek and LLaMA got lucky here. But ask them a layered, multi-hop, or slightly ambiguous question and they too will falter — sometimes elegantly, sometimes catastrophically.

Where We Are, Really

This small test points to something fundamental: LLMs don’t reliably know when to stop thinking. They don’t yet possess guardrails for converging on the obvious. They’re not “dumb,” but they’re also not what we’d call intelligent.

In human terms, they’re articulate overthinkers — capable of writing essays but unsure whether 30 + 3 = 33.

So no, LLMs aren’t intelligent. Not yet. But they’re fascinatingly close. And sometimes, dangerously confident.

Maybe Apple researchers are right.

Have you seen similar breakdowns in local models? I’d love to hear how they handled basic logic.